The Intelligence Factory: Global AI Ecosystem and Strategic Briefing for 24 March 2026

The global artificial intelligence landscape on 24 March 2026 has transitioned from the era of experimental large language models to a period of industrialized agentic swarms and integrated silicon factories. The news cycle today is dominated by the simultaneous maturation of hardware-software co-design, represented by the NVIDIA Vera Rubin platform, and the emergence of autonomous agentic frameworks that are redefining the relationship between human intent and machine execution. This intelligence report synthesizes the core developments in hardware infrastructure, financial engineering, national policy, and frontier research to provide a comprehensive analysis of the AI ecosystem's current state.

The Silicon Substrate: The Rise of the AI Super-Factory

The industrialization of AI has reached a definitive milestone today with the full-scale rollout and partner availability of the NVIDIA Vera Rubin platform, a system that fundamentally reimagines the data center as a single, cohesive computational entity rather than a collection of modular servers.[1, 2] This architectural shift is necessitated by the "four scaling laws" of modern AI: pretraining, post-training, test-time scaling, and agentic scaling.[1] Unlike previous generations, which focused primarily on raw training throughput, the Vera Rubin platform is co-designed from the grid to the chip to support the low-latency, high-bandwidth demands of persistent, autonomous agents.[2]

The Vera Rubin platform comprises seven distinct chip types, including the Vera CPU, Rubin GPU, and the newly integrated Groq 3 LPX inference accelerator.[2, 3] The integration of the Groq 3 LPX is particularly significant, as it provides a dedicated layer for deterministic, low-latency inference that eliminates the traditional tradeoff between interactivity and throughput in trillion-parameter models.[1, 2] By utilizing large-scale SRAM-only language processing units alongside HBM4-equipped GPUs, the system can maintain massive context windows while delivering tokens at speeds required for human-like interaction.[1, 2] Why this matters: The transition to integrated POD-scale architectures signals that the bottleneck for AI progress has shifted from individual chip performance to the efficiency of the entire data center stack, requiring a level of hardware-software synergy that favors vertically integrated providers.

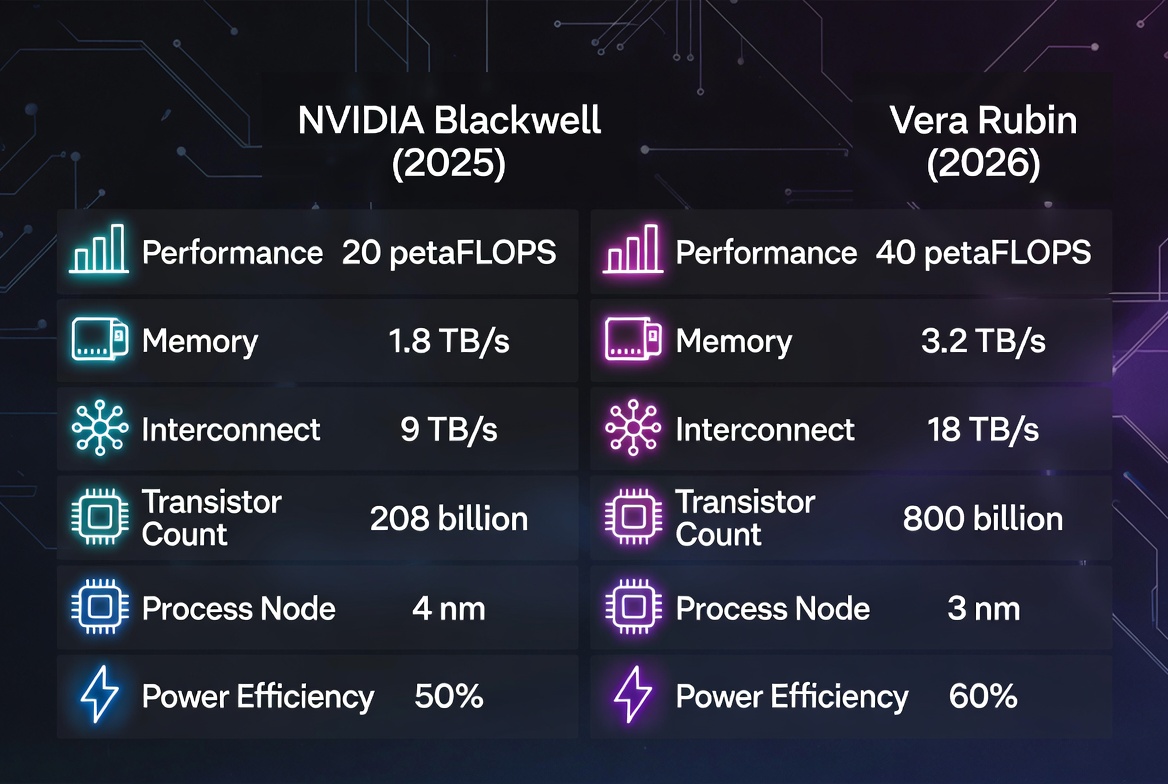

Technical Specifications of the NVIDIA Vera Rubin Platform

| Feature | NVIDIA Blackwell (2025) | NVIDIA Vera Rubin (2026) | Performance Impact |
|---|---|---|---|
| Core Architecture | Multi-chip Module | Extreme POD-Scale Co-design | Unified System Reasoning |
| Transistor Count (POD) | N/A | 1.2×10^15 | 10× Complexity Scaling |
| Compute Die Performance | 10 PFLOPS (NVFP4) | 50 PFLOPS (NVFP4) | 5× Inference Throughput |
| Memory Technology | HBM3e | HBM4 (Up to 288GB) | Enhanced Context Retention |
| Interconnect Bandwidth | 1.8 TB/s (NVLink 5) | 3.6 TB/s (NVLink 6) | 2× GPU Communication |
| CPU-GPU Link (C2C) | 900 GB/s | 1.8 TB/s | Seamless Data Movement |
| Networking Switch | 51.2 Tb/s (Spectrum-X) | 102.4 Tb/s (Spectrum-6) | 2× Network Fabric Speed |
| AI Storage Layer | Standard SSD | BlueField-4 STX (CMX) | 6× Compute Acceleration |

Data synthesized from NVIDIA technical releases and developer documentation.[1, 2, 3, 4]
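
The multipliers in the "Performance Impact" column can be checked directly against the two generation columns. The short sketch below does that arithmetic for the rows where both generations have a numeric figure; the dictionary keys and the helper name `generational_ratio` are illustrative choices, not NVIDIA terminology.

```python
# Figures copied from the Blackwell and Vera Rubin columns of the table above.
# Units are normalized so each pair is directly comparable.
SPECS = {
    "Compute die (PFLOPS, NVFP4)": (10.0, 50.0),
    "NVLink bandwidth (TB/s)": (1.8, 3.6),
    "CPU-GPU C2C link (TB/s)": (0.9, 1.8),  # 900 GB/s expressed in TB/s
    "Network switch (Tb/s)": (51.2, 102.4),
}


def generational_ratio(old: float, new: float) -> float:
    """Return how many times larger the new figure is than the old one."""
    return new / old


ratios = {name: generational_ratio(old, new) for name, (old, new) in SPECS.items()}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.1f}x")
```

On these figures the compute die improves fivefold while every bandwidth row doubles, which is consistent with the 5× and 2× entries in the table.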

The architectural core of the system, the Vera Rubin NVL72, functions as a single giant GPU by connecting 72 Rubin GPUs and 36 Vera CPUs through a massive copper NVLink spine.[1] This design significantly reduces energy consumption compared to traditional optical transceivers while providing the necessary bandwidth for Mixture-of-Experts (MoE) routing and heavy compute-bound context phases.[1, 3] Furthermore, the introduction of the NVIDIA DSX platform allows for dynamic power provisioning, enabling data centers to deploy up to 30% more infrastructure within existing power constraints.[2] Why this matters: As the energy demands of AI factories reach gigawatt-scale, the ability to maximize "tokens per watt" through physical AI and digital twins has become the primary competitive advantage for cloud providers and sovereign nations.

Support for the Rubin platform is already widespread among the world's leading AI labs and cloud service providers, including Amazon Web Services, Google Cloud, Microsoft Azure, and Oracle Cloud Infrastructure.[2, 4] Microsoft’s next-generation "Fairwater" AI super-factories are expected to scale to hundreds of thousands of Vera Rubin Superchips, providing the compute substrate for the next generation of reasoning models from OpenAI.[4] This level of commitment from hyperscalers confirms that the industry is no longer betting on incremental improvements, but on a total overhaul of the global computing infrastructure.[4, 5] Why this matters: The concentration of compute power into a few multi-gigawatt facilities creates a "compute moat" that reinforces the dominance of incumbent platforms while simultaneously creating new vulnerabilities in the global energy grid.

Capital and Financial Engineering: The $1 Trillion IPO Horizon

The financial narrative today is centered on OpenAI's sophisticated capital-raising strategies as it prepares for an initial public offering that could value the company at up to $1 trillion.[6] OpenAI has reportedly reached an annualized revenue milestone of $25 billion, a figure that highlights the rapid commercialization of generative AI since late 2022.[6] However, the company's financial disclosures also reveal a "standard legal risk factor": a substantial dependency on Microsoft for both financing and compute resources.[6] The documents warn that any modification to this partnership could severely impact OpenAI’s prospects and operating results.[6] Why this matters: The transition of AI startups to public markets requires them to reconcile their high-growth narratives with the reality of their massive infrastructure costs and platform dependencies, leading to increasingly complex financial structures.

To address these cost pressures and accelerate enterprise adoption, OpenAI is courting private equity firms like TPG, Bain Capital, and Advent International to form multi-billion dollar joint ventures.[7] In a move to sweeten the pitch against its rival Anthropic, OpenAI is offering preferred equity stakes with a guaranteed minimum return of 17.5%.[7] This strategy aims to raise $4 billion to fund the deployment of engineers who will customize AI models for large corporate clients.[7] Unlike Anthropic, which has reportedly not offered such guaranteed returns, OpenAI is prioritizing rapid market share capture through aggressive financial incentives.[7] Why this matters: The "turf war" for enterprise desks has shifted from model performance to financial engineering, as AI companies seek to embed their technology into corporate workflows before competitors can establish a foothold.

Comparative Financial Strategies: OpenAI vs. Anthropic

| Metric | OpenAI Strategy (2026) | Anthropic Strategy (2026) |
|---|---|---|
| Target PE Partners | TPG, Bain, Advent, Brookfield | Blackstone, Hellman & Friedman, Permira |
| Financing Round | ~$4 Billion (JV) | Enterprise-focused buyout round |
| Preferred Stake Return | 17.5% Guaranteed Minimum | No guaranteed return reported |
| Valuation Aim | $1 Trillion Potential IPO | ~$380 Billion (Post-money Feb) |
| Key Revenue Driver | Professional Implementation & Co-ownership | Sovereign/Regulated Cloud Focus |
| Compute Partner | Microsoft / Amazon / Oracle | Amazon / Google |

Analysis synthesized from Reuters and Business Times reporting.[6, 7, 8]

The broader venture capital market is reflecting this concentration of capital. In the first two months of 2026, AI startups raised a staggering $220 billion, with a significant portion of that total flowing into just a few players.[8] In February alone, the combined rounds of OpenAI, Anthropic, and Waymo represented 83% of all global venture capital.[8] This "capital tilting" suggests that the market is moving away from a broad startup boom and toward a "platform era" where a handful of companies control the essential infrastructure of AI.[8] Why this matters: The extreme concentration of funding creates a barrier to entry for new startups, forcing them to either focus on niche vertical applications or become dependent on the "big three" for compute and distribution.

Evidence of this verticalization is visible in smaller funding rounds as well. Kandou AI, a connectivity chip startup, raised $225 million from SoftBank and Synopsys to ramp up manufacturing of high-performance chips that connect GPUs more cheaply using copper technology.[9] Meanwhile, Novaworks secured $8 million in seed funding, led by Stalwart Ventures with participation from ServiceNow Ventures, to build an AI-native operating system for "Total Workforce Management".[10] This indicates that even as mega-rounds dominate the headlines, strategic capital is still flowing into the "connective tissue" of the AI economy: the hardware that links chips and the software that manages human-AI collaboration.[9, 10, 11] Why this matters: The success of specialized hardware and "agentic OS" startups suggests that the next phase of growth will be defined by the efficiency of the "human-in-the-loop" interface and the physical infrastructure that supports it.

National Policy: The Federal Preemption Shift

A pivotal moment in AI governance occurred today with the White House release of the "National Policy Framework for Artificial Intelligence".[12, 13] This framework provides a set of legislative recommendations designed to establish a uniform federal regulatory environment, effectively preempting a "patchwork" of state laws that the administration argues would hinder national innovation and global dominance.[13, 14] The framework addresses several high-priority areas, including child safety, energy costs, and the protection of intellectual property.[13] Why this matters: The federal government's move to override state-level AI regulations marks a shift toward a "national champion" strategy, prioritizing the speed of AI deployment over localized regulatory caution.

One of the most contentious aspects of the framework is its approach to child safety and parental empowerment.[15] The White House is calling on Congress to mandate that AI companies provide parents with "robust tools" to manage privacy settings, screen time, and content exposure.[13, 15] This includes the implementation of "commercially reasonable, privacy-protective age-assurance requirements" and features to reduce the risk of sexual exploitation and self-harm.[13, 14, 15] Crucially, while the framework seeks to preempt many state AI laws, it explicitly advises against preempting state laws that protect children online.[15] Why this matters: By shielding child safety laws from federal preemption, the administration is attempting to balance its pro-innovation agenda with the intense public and political pressure to protect minors from algorithmic harms.

Pillars of the National Policy Framework for AI

| Pillar | Key Objective | Mechanism of Implementation |
|---|---|---|
| Child Safety | Empowering parents & protecting minors | Age-assurance mandates & content controls |
| Infrastructure | Promoting "Energy Dominance" | Federal permitting & ratepayer protections |
| Intellectual Property | Protecting creators & publishers | Licensing frameworks & digital replica laws |
| Free Speech | Preventing censorship | Prohibitions on federal content coercion |
| Economic Growth | Removing barriers to innovation | Regulatory sandboxes & SME support |
| Governance | Uniform federal standard | Preemption of "undue" state burdens |

Compiled from White House legislative recommendations.[12, 13, 14]

The framework also addresses the intersection of AI and the national energy grid. It calls for streamlined federal permitting for AI infrastructure but balances this with a "Ratepayer Protection Pledge," which would legally require that residential electricity costs do not increase as a result of new AI data center construction.[13] Furthermore, it proposes the use of "regulatory sandboxes" to allow for experimentation in specific sectors without the need for a new federal AI regulatory agency.[12, 14] Why this matters: The focus on energy costs and regulatory sandboxes indicates that the federal government views AI as an industrial policy challenge as much as a technological one, requiring a balance between massive infrastructure build-out and consumer protection.

In the realm of intellectual property, the administration suggests that while AI training may be permissible, Congress should enable (but not require) licensing frameworks that allow rights holders to negotiate compensation collectively without incurring antitrust liability.[13] It also seeks to protect individuals from the unauthorized use of their "identifiable attributes" through AI-generated digital replicas.[13, 14] Why this matters: These recommendations reflect an attempt to modernize IP law for the generative era without stifling the data-hungry training processes that fuel frontier models.

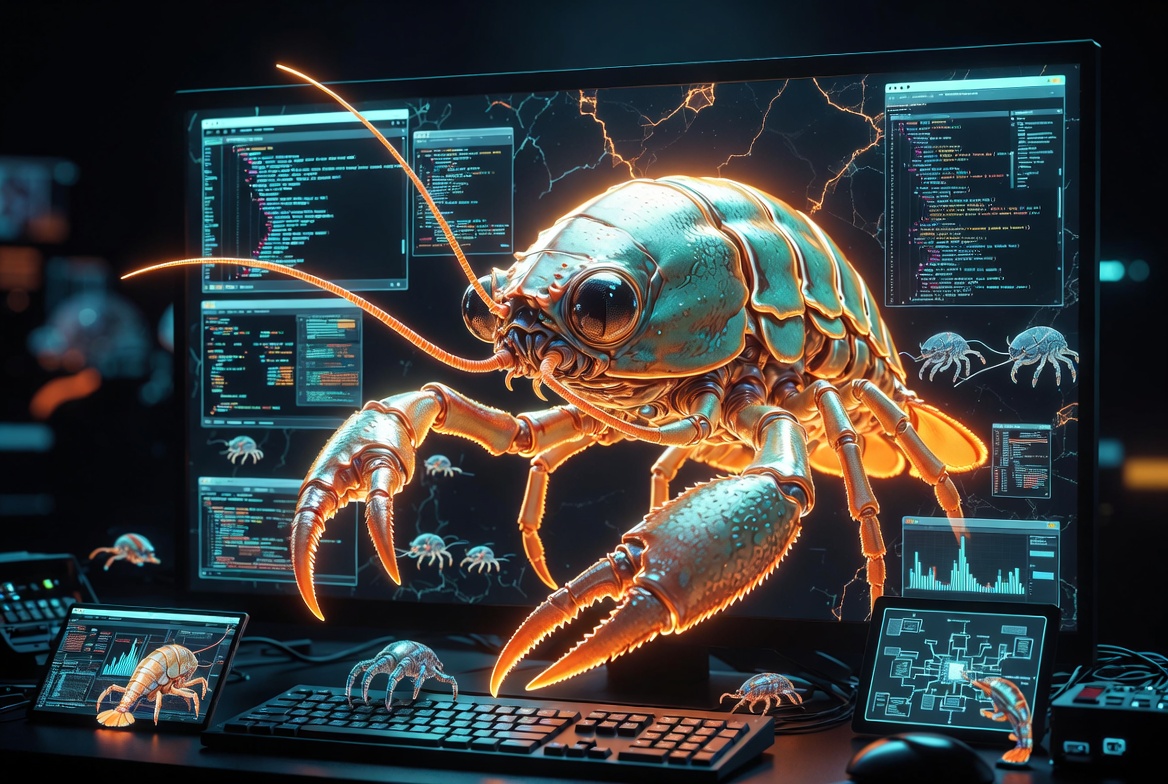

The Agentic Frontier: OpenClaw and the "Lobster" Phenomenon

The most disruptive trend in the AI ecosystem today is the viral explosion of the "OpenClaw" framework, an open-source tool that allows users to create autonomous AI agents capable of executing tasks directly on their computers.[16, 17] Created by developer Peter Steinberger, OpenClaw (originally Clawdbot) has achieved over 250,000 GitHub stars in just 60 days, a rate of adoption that surpasses the historical growth of Linux or React.[16, 18] These agents, affectionately nicknamed "claws" or "lobsters," can read and write files, run shell commands, browse the web, and even spawn sub-agents to handle complex goals.[16, 17] Why this matters: OpenClaw represents a shift from AI as a conversational assistant to AI as a "programmable digital worker," effectively lowering the barrier for the automation of professional workflows.

The phenomenon has taken particularly strong root in China, where "raising a lobster" has become a popular term for deploying and training these agents on local hardware.[19, 20] Chinese tech giants like Baidu, Alibaba, and Tencent have moved quickly to adopt the framework, rolling out "lobster" products for cloud, desktop, and mobile environments.[21] Baidu’s suite includes the DuMate desktop assistant and the RedClaw mobile platform, which leverage the "Programmatic Tool Calling" (PTC) mechanism and the long-context capabilities of the latest models to perform multi-step tasks across different applications.[20, 21] Why this matters: The rapid adoption of OpenClaw in China highlights a cultural and technical shift toward "embodied AI" and autonomous hardware control, areas where China is racing to establish a structural edge.

Comparative Landscape of Agentic AI Frameworks

| Framework | Developer / Origin | Primary Focus | Key Technical Feature |
|---|---|---|---|
| OpenClaw | Peter Steinberger (Open Source) | Local autonomous execution | Nested sub-agent spawning |
| NemoClaw | NVIDIA | Secure, managed agents | Single-command runtime [22] |
| Oracle AI Database | Oracle | Enterprise data-driven agents | Private Agent Factory (No-code) [23] |
| Manus | Independent | Tool use & context memory | High-context PTC integration [20] |
| PandaClaw | Insilico Medicine | Scientific drug discovery | Multi-agent bioinformatics [24] |
| Mistral Forge | Mistral AI | Custom corporate models | Support for custom domain data [22] |

Analysis synthesized from developer blogs and industry reports.[16, 20, 22, 23, 24]

Oracle’s entry into this market with its "Oracle AI Database" innovations underscores the enterprise demand for secure, data-driven agents.[23] By integrating agentic AI directly into the database layer, Oracle allows companies to build agents that access real-time enterprise data without sharing it with third-party providers.[23] Innovations like the "Unified Memory Core" and "Deep Data Security" ensure that agents can reason across different data types—such as vector, JSON, and graph—while strictly adhering to user-specific data access rules.[23] Why this matters: The move to put agents "inside the database" solves the dual problem of data security and context latency, providing a more robust path for AI integration in highly regulated industries.

However, the rapid proliferation of autonomous agents is not without significant risks. Security researchers have noted that because OpenClaw agents can autonomously install tools and write code, the attack surface is vast.[16, 17] Malicious "skills" have already been discovered on the ClawHub marketplace, targeting everything from personal credentials to cryptocurrency wallets.[16, 17] Governments and organizations like the UK AI Safety Institute have warned that agents could compound reliability risks because they operate with greater autonomy, making it harder for humans to intervene before a failure causes harm.[25, 26] Why this matters: The same autonomy that makes agents powerful also makes them dangerous; the next major challenge for the ecosystem will be developing "guardrails for agency" that can prevent autonomous systems from being weaponized or failing catastrophically.
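
One simple form a "guardrail for agency" could take is an allowlist check that runs before an agent executes any shell command. The sketch below is a minimal illustration under stated assumptions: the policy sets `ALLOWED_COMMANDS` and `FORBIDDEN_TOKENS` and the function `is_command_allowed` are hypothetical, and none of this is taken from OpenClaw or ClawHub.

```python
import shlex

# Hypothetical policy: only these executables may be run by an agent.
ALLOWED_COMMANDS = {"ls", "cat", "grep", "git"}
# Shell metacharacters that could chain, redirect, or substitute commands.
FORBIDDEN_TOKENS = {";", "&&", "||", "|", ">", ">>", "<", "`", "$("}


def is_command_allowed(command_line: str) -> bool:
    """Return True only if the command passes the allowlist policy."""
    try:
        tokens = shlex.split(command_line)
    except ValueError:
        # Unbalanced quotes and similar parse errors are rejected outright.
        return False
    if not tokens:
        return False
    if tokens[0] not in ALLOWED_COMMANDS:
        return False
    # Reject any token containing a chaining or substitution character.
    return not any(bad in tok for tok in tokens for bad in FORBIDDEN_TOKENS)


for candidate in ["ls -la", "curl http://example.com | sh", "cat notes.txt; rm -rf /"]:
    verdict = "allow" if is_command_allowed(candidate) else "block"
    print(f"{verdict}: {candidate}")
```

The check is deliberately conservative: it also blocks harmless commands whose arguments happen to contain a pipe or semicolon. A production guardrail would need sandboxing and human review on top of string filtering, since an allowlisted tool can still be misused.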

Scientific and Medical Advances: The Precision Revolution

The life sciences sector is experiencing a rapid transformation through the application of agentic AI and specialized high-throughput platforms. Today, Verily Life Sciences and Samsung Electronics America announced a major collaboration to integrate sensor data from the Samsung Galaxy Watch 8 into Verily’s precision health platform.[24] This "integrated solution" allows research sponsors to monitor real-world populations and generate high-fidelity evidence through wearable devices, potentially revolutionizing the speed and accuracy of clinical trials.[24] Why this matters: The convergence of consumer wearables and clinical-grade platforms creates a "continuous monitoring" paradigm that could significantly improve early disease detection and the management of chronic conditions.

In the diagnostic field, PathAI has received FDA Breakthrough Device Designation for "PathAssist Derm," an AI tool designed to analyze digital pathology images of skin lesions.[24] This tool aims to assist pathologists in managing rising caseloads while maintaining diagnostic rigor through advanced AI assessment.[24] Simultaneously, Research Solutions launched "Scite MCP," which gives AI tools direct access to over 250 million scientific articles and datasets, allowing researchers to evaluate the "trustworthiness" of findings without leaving their AI environment.[24] Why this matters: By embedding scientific literature and diagnostic support directly into the AI workflow, these tools are reducing the cognitive burden on medical professionals and researchers, though they also introduce new questions about the "black box" nature of AI-assisted diagnosis.

Breakthroughs in Biotechnology and Medical AI (24 March 2026)

| Organization | Innovation | Core Mechanism | Sectoral Impact |
|---|---|---|---|
| Verily / Samsung | Integrated Health Solution | Wearable sensor data + Pre platform | Clinical Trial Efficiency |
| PathAI | PathAssist Derm | FDA Breakthrough digital pathology | Skin Cancer Diagnosis |
| Insilico Medicine | PandaClaw | Agentic bioinformatics engine | Drug Target Discovery |
| Nautilus Biotech | Voyager Platform | Iterative mapping of 10B proteins | Mass Proteomics |
| Nicoya Lifesciences | FastHDX | Millisecond protein dynamics MS | Drug Design / Interrogation |
| Dalriada / Topos | IDP Drug Discovery | Mass spectrometry + Chemoproteomics | Difficult-to-treat Targets |

Data compiled from Bio-IT World and corporate announcements.[24]

The technical depth of these advancements is matched by their scale. Nautilus Biotechnology debuted its "Voyager Platform," designed to simultaneously map up to 10 billion intact proteins in a single run.[24] In the pharmaceutical domain, a new collaboration has produced "LFM2-2.6B-MMAI," a single checkpoint model trained to perform across multiple drug discovery subdomains, rather than a patchwork of separate models.[24] This model addresses the critical challenge of how pharmaceutical companies can harness AI without exposing proprietary molecules to external cloud services.[24] Why this matters: The move toward "on-prem" or secure-cloud pharmaceutical AI models suggests that data sovereignty is becoming a primary constraint in the race for new therapeutics.

Despite these technical leaps, the human element remains a critical factor. Research from the University of Michigan and Michigan State University published today found that while patients are open to medical AI—especially if it outperforms general practitioners—they still overwhelmingly desire the presence of a human doctor to oversee the process.[27] Trust is highest when the AI has formal FDA approval, national certifications, and was trained on high-quality, representative data.[27] Why this matters: The adoption of medical AI is not just a technological hurdle but a bioethical and psychological one; clinicians must be trained not just to use AI, but to act as the "trust bridge" between the algorithm and the patient.

The European Context: Sovereign Stacks and Regulatory Milestones

Europe continues to carve out a distinct identity in the global AI ecosystem by prioritizing data sovereignty and explainable AI. Mistral AI, based in Paris, has launched "Mistral Forge," a platform that allows enterprises to build custom AI models trained from scratch on their own data.[22, 28] This provides a more controlled alternative to traditional fine-tuning or RAG (Retrieval-Augmented Generation), with the company positioning it as a tool for "technological sovereignty".[22, 28] Mistral also released "Mistral Small 4," a major reasoning-optimized model that employs a 119-billion parameter Mixture of Experts architecture.[22, 28] Why this matters: Mistral’s growth—with revenue surging from $20 million to over $400 million in one year—indicates a strong global appetite for alternatives to U.S. proprietary models that prioritize European data laws.

The European regulatory environment is also reaching a critical "compliance cliff." The EU AI Act is already in force, and as of today, prohibited AI practices—such as harmful manipulation and social scoring—carry fines of up to 7% of global annual revenue.[29, 30] The next major milestone is 2 August 2026, when full compliance requirements for "high-risk" systems like hiring algorithms and biometric identification will activate.[29, 30] To assist with this transition, the European Commission has published the second draft of its "Transparency Code of Practice," which outlines how providers must mark and label AI-generated content (deepfakes) and ensure that their systems are detectable as artificially generated.[31, 32] Why this matters: The EU’s rigorous transparency requirements are forcing global AI providers to rethink their content authentication strategies, likely leading to a new global standard for "digital provenance."
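
The 7% ceiling is easier to reason about as arithmetic. The sketch below computes the upper bound for a company of a given size. Note one addition that this briefing does not state: the Act's penalty provisions pair the 7% figure with a fixed amount of EUR 35 million and apply whichever is higher for undertakings. The function name `max_fine_eur` and the example turnovers are illustrative.

```python
FIXED_CAP_EUR = 35_000_000  # fixed ceiling in the Act's penalty provisions
TURNOVER_PERCENT = 7        # percentage of worldwide annual turnover


def max_fine_eur(worldwide_turnover_eur: int) -> float:
    """Upper bound on a prohibited-practice fine for an undertaking."""
    return max(FIXED_CAP_EUR, worldwide_turnover_eur * TURNOVER_PERCENT / 100)


# Illustrative turnovers, not figures for any real company.
for turnover in (100_000_000, 2_000_000_000):
    print(f"turnover EUR {turnover:,}: max fine EUR {max_fine_eur(turnover):,.0f}")
```

For smaller firms the fixed amount dominates, while for large providers the percentage term does; the crossover sits at EUR 500 million in turnover. This is a ceiling only, and actual penalties depend on the regulator's assessment.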

Key European AI Providers and Models (2026)

| Company | Location | Key Model / Tool | Primary Differentiator |
|---|---|---|---|
| Mistral AI | France | Mistral Forge / Small 4 | Sovereign stack & open-weight models |
| Aleph Alpha | Germany | Luminous | Explainability & trustworthy AI [33] |
| DeepL | Germany | DeepL Write / Translate | Superior European language quality |
| Black Forest Labs | Germany | FLUX series | High-performance image generation [33] |
| Synthesia | UK | Avatar Video Platform | Enterprise-grade AI avatars [33] |
| ElevenLabs | UK | ElevenAgents | Human-like voice synthesis [34] |
| Wayve | UK | Embodied AI | Mapless autonomous driving AI [34] |

Synthesized from "European AI Tools Comparison" and corporate data.[33, 34, 35]

Specialized European startups are also achieving significant scale. Black Forest Labs, founded by the team behind Stable Diffusion, has reached a valuation of $3.25 billion with its FLUX image generation models, making it Germany's most valuable AI company.[33] Meanwhile, DeepL remains the "fastest way for organizations to achieve results," evolving from a translation service into a comprehensive language AI platform that emphasizes privacy by default.[33, 35] These companies are increasingly being viewed as strategic choices rather than just alternatives to U.S. giants, as they are "designed for European regulations from the ground up".[35] Why this matters: The maturity of European AI tools suggests that "data sovereignty" is becoming a competitive advantage, attracting companies that want to avoid the legal risks of processing customer data outside the EU.

Frontier Research: Self-Improvement and Structural Efficiency

The research landscape on 24 March 2026 is moving toward systems that can autonomously optimize their own architectures and learning processes. A prominent example is the "Darwin Gödel Machine" (DGM), which has demonstrated "open-ended self-improvement" in coding tasks.[36] Its extension, DGM-Hyperagents (DGM-H), generates and evaluates self-modified variants of its own learning mechanisms, and its authors argue that this can in principle accelerate progress on any computable task without relying on domain-specific human alignment.[36] This represents a shift from "handcrafted" AI systems toward "evolutionary" systems that can discover more efficient paths to intelligence than human engineers.[36] Why this matters: The emergence of self-improving agents marks the beginning of "recursive scaling," where gains in reasoning ability lead directly to gains in the ability to improve the system itself.
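
The generate-and-evaluate loop described above can be illustrated with a toy evolutionary search. This is a minimal sketch, not the DGM algorithm: the "agent" is just a list of three numbers, `evaluate` is a made-up benchmark, and `self_modify` is random perturbation rather than an agent rewriting its own code. It is meant only to show the archive-sample-mutate-score cycle.

```python
import random
from typing import List, Tuple


def evaluate(agent: List[float]) -> float:
    """Toy benchmark: higher is better, and the best possible score is 0."""
    target = [1.0, -2.0, 0.5]
    return -sum((a - t) ** 2 for a, t in zip(agent, target))


def self_modify(agent: List[float], rng: random.Random) -> List[float]:
    """Produce a variant by perturbing the parent's parameters."""
    return [a + rng.gauss(0.0, 0.3) for a in agent]


def evolve(generations: int = 200, seed: int = 0) -> Tuple[List[float], float]:
    rng = random.Random(seed)
    start = [0.0, 0.0, 0.0]
    archive = [(start, evaluate(start))]  # every evaluated variant is kept
    for _ in range(generations):
        parent, _score = rng.choice(archive)  # sample any archived agent
        child = self_modify(parent, rng)
        archive.append((child, evaluate(child)))
    return max(archive, key=lambda entry: entry[1])


best_agent, best_score = evolve()
print(f"best score after search: {best_score:.3f}")
```

Keeping every variant in the archive, rather than only the current best, lets the search branch from weaker ancestors, which is one way to read the "open-ended" label.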

In terms of architectural efficiency, Moonshot AI has published research on "Attention Residuals" (AttnRes), a method that replaces fixed unit weights in transformer models with input-dependent learned weights.[22, 37] By reframing model depth as a form of attention, AttnRes allows deeper layers to "selectively aggregate" information from earlier layers, mitigating the dilution of gradients and hidden states that typically occurs as networks grow deeper.[22, 37] This approach has been integrated into the 48-billion parameter "Kimi Linear" architecture, resulting in more uniform output magnitudes and improved performance across all evaluated tasks.[37] Why this matters: As the industry pushes toward ever-deeper models, structural innovations like AttnRes are essential for maintaining training stability and reducing the computational cost of intelligence.
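
The contrast between fixed unit weights and input-dependent weights can be made concrete with a small sketch. The code below is an assumption-laden illustration, not Moonshot AI's implementation: it assumes the weights come from a softmax over depth of scaled dot products between a per-layer query vector and each earlier layer's output, and the names `standard_residual` and `attention_residual` are invented here.

```python
import math
from typing import List, Tuple

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def standard_residual(layer_outputs: List[Vector]) -> Vector:
    """Fixed unit weights: every earlier output is added with weight 1."""
    dim = len(layer_outputs[0])
    return [sum(out[d] for out in layer_outputs) for d in range(dim)]


def attention_residual(layer_outputs: List[Vector], query: Vector) -> Tuple[Vector, List[float]]:
    """Input-dependent weights: a softmax over depth of query-output scores."""
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, out) / scale for out in layer_outputs])
    dim = len(layer_outputs[0])
    mixed = [sum(w * out[d] for w, out in zip(weights, layer_outputs)) for d in range(dim)]
    return mixed, weights


# Three earlier layer outputs and an assumed learned query for the current layer.
outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
mixed, weights = attention_residual(outputs, query=[2.0, 0.0])
print("unit-weight sum:", standard_residual(outputs))
print("attention weights over depth:", [round(w, 3) for w in weights])
```

Because the weights sum to one, the mixed state stays on the scale of a single layer output instead of growing with depth, which matches the "more uniform output magnitudes" reported above. With a zero query the weights become uniform and the result is a plain average of earlier layers.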

Top-Cited and Trending Research (arXiv March 2026)

| Submission ID / Source | Title / Theme | Focus Area | Core Insight |
|---|---|---|---|
| arXiv:2603.21180 | Darwin Gödel Machine | Self-Improvement | Open-ended self-modification [36] |
| Moonshot AI | Attention Residuals | Architecture | Learned layer accumulation [37] |
| arXiv:2603.00285 | TraderBench | Finance | AI agent robustness in markets [38] |
| MiroThinker v1.0 | Interaction Scaling | Agentic Behavior | RL-based multi-turn reasoning [37] |
| arXiv:2602.01078 | AutoHealth | Medicine | Uncertainty-aware health modeling [39] |

Compiled from arXiv and paper-trending platforms.[36, 37, 38, 39]

Further research into "interaction scaling" is showcased by "MiroThinker v1.0," an open-source research agent designed to advance tool-augmented reasoning.[37] Unlike previous models that focused on model size or context length, MiroThinker systematically trains for deeper and more frequent agent-environment interactions.[37] The researchers found that "interaction depth" exhibits scaling behaviors analogous to traditional compute scaling, allowing the model to perform up to 600 tool calls per task for complex research workflows.[37] Why this matters: Interaction scaling provides a new dimension for AI performance, suggesting that the "intelligence" of an agent is as much about how it uses its tools as it is about the size of its neural network.
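
"Interaction depth" is, mechanically, the number of times an agent goes around a choose-call-then-observe loop before answering. The sketch below shows such a loop with an explicit tool-call budget. The 600 figure is the ceiling reported above; everything else (`run_agent`, `toy_policy`, `toy_tool`) is a hypothetical stand-in and not MiroThinker code.

```python
from typing import Callable, List, Optional

MAX_TOOL_CALLS = 600  # ceiling reported for MiroThinker, reused here as a budget


def run_agent(
    choose_call: Callable[[List[str]], Optional[str]],
    execute: Callable[[str], str],
    budget: int = MAX_TOOL_CALLS,
) -> List[str]:
    """Alternate between choosing a tool call and observing its result."""
    observations: List[str] = []
    for _ in range(budget):
        call = choose_call(observations)
        if call is None:  # the policy judges it has gathered enough evidence
            break
        observations.append(execute(call))
    return observations


# Hypothetical stand-ins for a model policy and a tool backend.
def toy_policy(observations: List[str]) -> Optional[str]:
    return None if len(observations) >= 5 else f"search:query-{len(observations)}"


def toy_tool(call: str) -> str:
    return f"result for {call}"


trace = run_agent(toy_policy, toy_tool)
print(f"tool calls used: {len(trace)} of {MAX_TOOL_CALLS}")
```

The budget is a hard stop that applies even when the policy never decides it is finished, so raising it is the knob that trades cost for deeper interaction.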

The security of these research-driven agents is also being scrutinized. "TraderBench" evaluates the robustness of AI agents in "adversarial capital markets," highlighting the risks of deploying autonomous systems in high-stakes financial environments where they may be vulnerable to sophisticated manipulation.[38] This body of work underscores a growing consensus: as AI systems gain the ability to act on the world, our evaluation metrics must shift from simple accuracy to "adversarial resilience" and "long-term behavioral stability".[25, 38] Why this matters: The maturation of "AI for Science" and "AI for Finance" requires a new set of benchmarks that can predict how autonomous agents will behave when faced with real-world complexity and bad actors.

Legal and Operational Insights: AI in Professional Services

The adoption of AI in the legal and corporate sectors has moved from exploration to structured implementation. Today, industry analyst Frank Ramos published a "52-week AI adoption plan for law firms," emphasizing that a strong rollout needs leadership, clear goals, and "guarded expansion".[40] This structural approach is a response to the "regulatory complexity and resource fatigue" reported by over 61% of compliance teams in 2026.[30] Law firms are increasingly turning to "Legal AI" startups to automate corporate functions, with these technologies now viewed as a "mature investment theme" rather than an experimental market.[41] Why this matters: The shift from "testing" to "deployment plans" indicates that AI is now a core operational requirement for professional service firms, where efficiency gains are being traded for the risk of algorithmic error.

In the realm of judicial procedure, the use of AI in video depositions is being flagged as a "treacherous" development.[42] Bad actors are using authentic deposition recordings to create fabricated footage for extortion or to undermine the credibility of witnesses.[42] To combat this, members of the Coalition for Content Provenance and Authenticity—including Adobe, Google, Microsoft, and OpenAI—are developing "content credentials" to cryptographically verify the authenticity of video.[42] Why this matters: The erosion of trust in digital evidence is creating a new market for "authentication-as-a-service," where the value of a legal document is tied to its cryptographic provenance rather than its visual appearance.
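
The core idea behind cryptographic verification of a recording is that any change to the bytes invalidates a credential issued over the original. The sketch below shows that property with Python's standard library only. It is a simplification: real content credentials rely on certificate-based digital signatures and embedded manifests, whereas this example substitutes a shared-secret HMAC, and the function names and key are hypothetical.

```python
import hashlib
import hmac


def issue_credential(video_bytes: bytes, signing_key: bytes) -> str:
    """Bind a credential to the exact bytes of a recording."""
    digest = hashlib.sha256(video_bytes).digest()
    return hmac.new(signing_key, digest, hashlib.sha256).hexdigest()


def verify_credential(video_bytes: bytes, signing_key: bytes, credential: str) -> bool:
    """Recompute the credential and compare in constant time."""
    expected = issue_credential(video_bytes, signing_key)
    return hmac.compare_digest(expected, credential)


# Placeholder data standing in for a deposition recording and a custodian's key.
key = b"hypothetical-court-reporter-key"
original = b"frame-1|frame-2|frame-3"
tampered = b"frame-1|frame-X|frame-3"

credential = issue_credential(original, key)
print("original verifies:", verify_credential(original, key, credential))
print("tampered verifies:", verify_credential(tampered, key, credential))
```

A shared-secret scheme only works when the verifier is trusted with the key. Public-key signatures remove that requirement, which is why provenance standards use them.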

Compliance and Operational Trends (March 2026)

| Trend | Data / Metric | Source / Context |
|---|---|---|
| CFO ROI Rethink | Gartner Finance Symposium | Shift from cost-cutting to value-creation [43] |
| Regulatory Fatigue | 61% of Compliance Teams | Managing multi-jurisdictional AI rules [30] |
| Hiring AI Scrutiny | Aug 2026 EU Deadline | Strict audits for bias in recruitment [30] |
| Robocall Mitigation | Belthrough LLC Shutdown | FCC enforcement on illegal AI calls [44] |
| AI in Debt Collection | 3 attempts/week limit | FCC/FTC communication caps [44] |
| AI Literacy Standards | WH Policy Recommendation | Workforce training as a federal priority [13] |

Synthesized from Gartner, Compliance Podcast Network, and FCC reports.[30, 43, 44, 45]

Compliance is also shifting from "detective" to "preventative" through the use of AI.[45] Companies are deploying AI for "advertising pre-check review" and to transform financial crimes compliance, using algorithms to identify patterns of risk before they manifest in violations.[45] However, the FCC is also tightening restrictions, particularly on offshore call centers and AI-driven robocalls.[44] A recent "Final Determination Order" confirmed the shutdown of Belthrough LLC for transmitting illegal robocalls, signaling that regulators are becoming more aggressive in their use of network-wide blocking for AI-enabled bad actors.[44] Why this matters: As AI tools become standard for both legitimate marketing and illegal spam, the regulatory battleground is moving to the "gateway" level, where providers are held liable for the traffic they transmit.

Strategic Outlook and Conclusion

The state of the AI ecosystem on 24 March 2026 reveals a landscape where the "frontiers" are no longer just about model size, but about systemic integration and autonomous agency. The hardware announcements from NVIDIA confirm that the AI factory is the new unit of global compute, requiring multi-gigawatt power and extreme co-design across every layer of the stack. Financially, the "turf war" between OpenAI and Anthropic highlights a move toward aggressive enterprise capture, supported by sophisticated private equity structures and guaranteed returns.

On policy, the U.S. National Policy Framework marks a definitive move toward federal uniformity, prioritizing national competitiveness and child safety while preempting a potentially stifling patchwork of state laws. This is mirrored in Europe by the finalization of the EU AI Act's transparency standards, which are setting the global stage for how AI content must be identified and authenticated. In the research lab, the shift toward self-improving agents (DGM) and interaction scaling (MiroThinker) suggests that the next generation of AI will be characterized by its ability to learn from the world autonomously, rather than just predicting the next token in a static dataset.

For the professional world, the viral growth of OpenClaw and "lobsters" indicates that "agentic workflows" are becoming the default mode of operation. Whether in legal services, medical research, or workforce management, the question is no longer whether AI should be used, but how to govern its autonomous actions. As we move toward the second half of 2026, the focus must shift from building powerful models to building "trustworthy agency": systems that can act on our behalf without undermining the human oversight that remains, as patients and regulators alike insist, a non-negotiable requirement.

- NVIDIA Vera Rubin POD: Seven Chips, Five Rack-Scale Systems, One AI Supercomputer, https://developer.nvidia.com/blog/nvidia-vera-rubin-pod-seven-chips-five-rack-scale-systems-one-ai-supercomputer/

- NVIDIA Vera Rubin Opens Agentic AI Frontier, https://nvidianews.nvidia.com/news/nvidia-vera-rubin-platform

- Inside the NVIDIA Vera Rubin Platform: Six New Chips, One AI Supercomputer, https://developer.nvidia.com/blog/inside-the-nvidia-rubin-platform-six-new-chips-one-ai-supercomputer/

- NVIDIA Kicks Off the Next Generation of AI With Rubin — Six New Chips, One Incredible AI Supercomputer - NVIDIA Investor Relations, https://investor.nvidia.com/news/press-release-details/2026/NVIDIA-Kicks-Off-the-Next-Generation-of-AI-With-Rubin--Six-New-Chips-One-Incredible-AI-Supercomputer/default.aspx

- NVIDIA Vera Rubin DSX AI factory, Omniverse twin | NVDA Stock News, https://www.stocktitan.net/news/NVDA/nvidia-releases-vera-rubin-dsx-ai-factory-reference-design-and-1gjy9cryrrp6.html

- OpenAI warns Microsoft ties pose risk ahead of potential IPO, CNBC ..., https://www.businesstimes.com.sg/companies-markets/telcos-media-tech/openai-warns-microsoft-ties-pose-risk-ahead-potential-ipo-cnbc-reports

- OpenAI sweetens private equity pitch amid enterprise turf war with ..., https://www.thehindu.com/sci-tech/technology/openai-sweetens-private-equity-pitch-amid-enterprise-turf-war-with-anthropic-sources-say/article70778167.ece

- AI startup funding hits $220 billion in two months ... - eeNews Europe, https://www.eenewseurope.com/en/ai-startup-funding-hits-220-billion-in-two-months/

- Ex-Goldman banker's AI chip company secures SoftBank funding | Communications Today, https://www.communicationstoday.co.in/ex-goldman-bankers-ai-chip-company-secures-softbank-funding/

- Novaworks Secures $8M to Reinvent Enterprise Workforce Management with Agentic AI, https://bayelsawatch.com/novaworks-secures-8m/

- AI Startup Funding News Today – Latest Deals & Rounds 2026 - AI Funding Tracker, https://aifundingtracker.com/ai-startup-funding-news-today/

- White House releases national AI policy framework | AHA News, https://www.aha.org/news/headline/2026-03-20-white-house-releases-national-ai-policy-framework

- White House releases the National Policy Framework for Artificial ..., https://www.dlapiper.com/en-us/insights/publications/2026/03/white-house-releases-the-national-policy-framework-for-ai-key-points

- White House Releases National Legislative Policy Framework for AI - Wiley Rein, https://www.wiley.law/alert-White-House-Releases-National-Legislative-Policy-Framework-for-AI

- White House urges Congress to protect children on AI platforms | K-12 Dive, https://www.k12dive.com/news/white-house-urges-congress-to-protect-children-on-ai-platforms/815451/

- OpenClaw; Explained Simply. The open source project Jensen Huang… | by Nisarg Bhatt | Mar, 2026 | Towards AI, https://pub.towardsai.net/openclaw-explained-simply-50fe4af8dcdf

- OpenClaw Explained: The Free AI Agent Tool Going Viral Already in 2026 - KDnuggets, https://www.kdnuggets.com/openclaw-explained-the-free-ai-agent-tool-going-viral-already-in-2026

- Mastering Multi-Platform Distribution: The OpenClaw Playbook for 2026 Creators - Stormy AI, https://stormy.ai/blog/mastering-multi-platform-distribution-openclaw-playbook-2026

- Alibaba (阿里巴巴集团): Latest News and Updates | South China Morning Post, https://www.scmp.com/topics/alibaba

- Unveiling the Popularity of OpenClaw: Novel Issues in Agent, AI Coding, and Team Collaboration - 36氪, https://eu.36kr.com/en/p/3718202953037191

- Baidu joins China's OpenClaw frenzy with new AI agents - The Economic Times, https://m.economictimes.com/tech/artificial-intelligence/baidu-joins-chinas-openclaw-frenzy-with-new-ai-agents/articleshow/129637738.cms

- AI News Briefs BULLETIN BOARD for March 2026 | Radical Data Science, https://radicaldatascience.wordpress.com/2026/03/19/ai-news-briefs-bulletin-board-for-march-2026/

- Oracle Unveils AI Database Agentic Innovations for Business Data, https://www.prnewswire.com/news-releases/oracle-unveils-ai-database-agentic-innovations-for-business-data-302722719.html

- Verily, Samsung Collaborate, Improving AI Trustworthiness, FDA Approval for PathAI, https://www.bio-itworld.com/news/2026/03/24/verily--samsung-collaborate--improving-ai-trustworthiness--fda-approval-for-pathai

- International AI Safety Report 2026 Examines AI Capabilities, Risks, and Safeguards, https://www.insideprivacy.com/artificial-intelligence/international-ai-safety-report-2026-examines-ai-capabilities-risks-and-safeguards/

- The release of the international AI safety report 2026: navigating rapid AI advancement and emerging risks - techUK, https://www.techuk.org/resource/the-release-of-the-international-ai-safety-report-2026-navigating-rapid-ai-advancement-and-emerging-risks.html

- The algorithm will see you now? Patients say not without a doctor nearby | University of Michigan Health, https://www.uofmhealth.org/health-lab/algorithm-will-see-you-now-patients-say-not-without-doctor-nearby

- Mistral Pioneers Sovereign AI in Europe - AI Business, https://aibusiness.com/foundation-models/mistral-pioneers-sovereign-ai-in-europe

- EU AI Act - Updates, Compliance, Training, https://www.artificial-intelligence-act.com/

- AI Governance in 2026: Why Staying Current Is No Longer Optional for Your Business, https://securityboulevard.com/2026/03/ai-governance-in-2026-why-staying-current-is-no-longer-optional-for-your-business/

- AI View: March 2026, https://www.simmons-simmons.com/en/publications/cmmvt9ow200l8tvk49kbfu1nv/ai-view-march-2026

- Code of Practice on marking and labelling of AI-generated content, https://digital-strategy.ec.europa.eu/en/policies/code-practice-ai-generated-content

- European AI: 7 companies and models that decision makers should know - data:unplugged, https://www.data-unplugged.de/en/blog/european-ai-models

- 60 Best AI Startups in Europe To Watch in 2026 [List] - OMNIUS, https://www.omnius.so/blog/ai-startups-in-europe

- The Most Important European AI Tools 2026: The Complete Comparison - EuroBoxx, https://euroboxx.eu/european-ai-tools-comparison/

- Artificial Intelligence - arXiv, https://arxiv.org/list/cs.AI/new

- Trending Papers - Hugging Face, https://huggingface.co/papers/trending

- Artificial Intelligence Mar 2026 - arXiv, https://arxiv.org/list/cs.AI/current

- Artificial Intelligence - arXiv, https://www.arxiv.org/list/cs.AI/pastweek?skip=560&show=2000

- Build It right before you build it big: A 52 week AI adoption plan for law firms - The Florida Bar, https://www.floridabar.org/the-florida-bar-news/build-it-right-before-you-build-it-big-a-52-week-ai-adoption-plan-for-law-firms/

- Key Trends in the Startup and Venture Investment Market on March 24, 2026: AI, Deeptech, and IPO Market - Sergey Tereshkin, https://sergeytereshkin.com/publications/startup-venture-investment-news-march-24-2026

- Video Depositions in the AI Age Is Treacherous for Testimonies - Bloomberg Law News, https://news.bloomberglaw.com/legal-exchange-insights-and-commentary/video-depositions-in-the-ai-age-is-treacherous-for-testimonies

- Gartner Says CFOs Need to Rethink the ROI of AI Investments, https://www.gartner.com/en/newsroom/press-releases/2026-03-24-gartner-says-cfos-need-to-rethink-the-roi-of-ai-investments

- Regulatory Report: March 2026 - Gryphon Ai, https://gryphon.ai/regulatory-report-march-2026/

- AI Today in 5: March 24, 2026 the From Detect to Prevent Edition, https://compliancepodcastnetwork.net/ai-today-in-5-march-24-2026-the-from-detect-to-prevent-edition/

About the Author

Albert Schaper is the Founder of Best-AI.org and a seasoned entrepreneur with a unique background combining investment banking expertise with hands-on startup experience. As a former investment banker, Albert brings deep analytical rigor and strategic thinking to the AI tools space, evaluating technologies through both a financial and operational lens. His entrepreneurial journey has given him firsthand experience in building and scaling businesses, which informs his practical approach to AI tool selection and implementation. At Best-AI.org, Albert leads the platform's mission to help professionals discover, evaluate, and master AI solutions. He creates comprehensive educational content covering AI fundamentals, prompt engineering techniques, and real-world implementation strategies. His systematic, framework-driven approach to teaching complex AI concepts has established him as a trusted authority, helping thousands of professionals navigate the rapidly evolving AI landscape. Albert's unique combination of financial acumen, entrepreneurial experience, and deep AI expertise enables him to provide insights that bridge the gap between cutting-edge technology and practical business value.
